
Difficult to develop embedded vision? Definitely!

Try to imagine running complex vision processing algorithms on an embedded system with limited performance while also balancing power, size, cost, and development life cycle. It is no easy task. Industrial and automotive scenarios demand a higher level of timeliness and reliability, so vision processing must be both fast and accurate; there is no room for error. And now that the age of AI has arrived, isn't it also time to adjust our approach to machine learning? Embedded vision developers, among others, are certainly under pressure to make some breakthroughs.

People, however, are always looking for simple answers to complex questions. For embedded vision, a number of good solutions that make life easier for developers already exist.

Let's start with hardware. A single-processor architecture is certainly an easier starting point, but developers inevitably have to compromise among performance, flexibility, and expandability. That was the situation until Xilinx introduced Zynq, a then brand-new FPGA SoC architecture that combines an embedded processing system (PS) and programmable logic (PL) in one heterogeneous device and that caught everyone's attention.

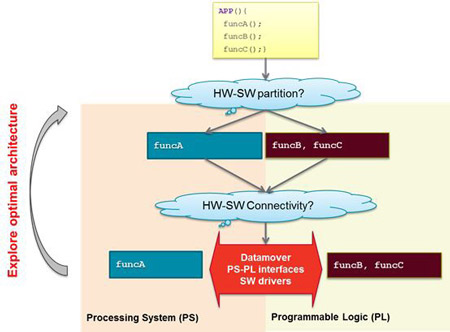

Embedded vision developers can assign each computing task to whichever of the two systems suits it best. Accelerating high performance vision processing on the PL, as expected, delivers better performance than handling it on a general purpose CPU. Because the PL is hardware programmable, developers can reuse existing IP or build their own custom IP from algorithms to achieve unique vision processing effects.

To better support the development of high performance vision processing applications, Xilinx introduced the Zynq Ultrascale+ MPSoC. Compared to the previous generation, the device offers improved performance and specific optimization for real-time vision processing. A look at the core resources of Zynq Ultrascale+ MPSoC will make it clear.

• The four ARM Cortex-A53 CPUs provide impressive computing power and support full-featured operating systems such as Linux.

• The two ARM Cortex-R5F real-time processing units (RPUs) can work in lockstep or independent mode. Lockstep mode can be used in scenarios with more rigorous safety requirements.

• The Mali-400 GPU is used for 2D/3D graphics display and supports high quality video output.

It would not be an exaggeration to say Zynq Ultrascale+ MPSoC, with abundant hardware resources that enable developers to work much more efficiently, is a device designed exclusively for embedded vision.

Fig. 1: Zynq Ultrascale+ MPSoC targets embedded vision applications (Image source: Xilinx)

Having a hardware architecture that meets the requirements, however, is not the same as mastering embedded vision development. Developers usually assume that the ability to write HDL code is a must for anyone who wishes to make proper use of FPGAs. That was difficult enough in itself, let alone on the more complex FPGA+CPU heterogeneous system in Zynq Ultrascale+ MPSoC.

Xilinx is prepared to address this concern. After launching Zynq, it also developed a “software defined” toolkit to make FPGA SoC development easier: SDSoC.

A simple way to understand SDSoC is that it combines the development tools and resource libraries for FPGA SoCs in one standardized development environment. Previously, a design required separate system architecture, hardware design, and software development teams to coordinate and iterate again and again until it was complete. SDSoC replaces that process with a more automated model that both simplifies the work and improves efficiency.

The core vision of SDSoC is to let developers with little or no FPGA design experience use a high-level programming language to tap the power of programmable hardware, working alongside the general purpose processing units, without writing a single line of RTL code. For embedded vision, this means developers who use SDSoC are no longer burdened by complex low-level programming and can devote their time and energy to system-level factors that increase product differentiation and added value, such as algorithm optimization.
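To make the idea concrete, here is a minimal sketch of the kind of plain C++ a developer might write in such a flow. It is an illustration under assumptions, not code from Xilinx: the names `threshold_image` and `count_bright` and the frame size are hypothetical, and the SDSoC data-movement pragma appears only inside a comment so that the file builds with any standard C++ compiler.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical fixed frame size; hardware-oriented code generally wants
// loop bounds that are known at compile time.
constexpr std::size_t kWidth = 8;
constexpr std::size_t kHeight = 4;

// Candidate for the programmable logic (PL): a per-pixel binary threshold.
// In an SDSoC-style flow, a function like this would be selected for
// hardware, typically with a data-movement hint such as
//   #pragma SDS data access_pattern(src:SEQUENTIAL, dst:SEQUENTIAL)
// (kept as a comment here; it is an assumption about how one might annotate it).
void threshold_image(const std::uint8_t* src, std::uint8_t* dst,
                     std::uint8_t level) {
    for (std::size_t i = 0; i < kWidth * kHeight; ++i) {
        dst[i] = (src[i] > level) ? 255 : 0;
    }
}

// Control code that would stay on the processing system (PS):
// run the kernel, then count the bright pixels in the result.
std::size_t count_bright(const std::vector<std::uint8_t>& src,
                         std::uint8_t level) {
    assert(src.size() == kWidth * kHeight);
    std::vector<std::uint8_t> dst(src.size());
    threshold_image(src.data(), dst.data(), level);
    std::size_t n = 0;
    for (std::uint8_t p : dst) {
        n += (p == 255);
    }
    return n;
}
```

The point of the split is that the regular, data-parallel loop is the natural candidate for the PL, while the irregular bookkeeping stays in ordinary software on the PS; the source code itself remains high-level C++ in both cases.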

Fig. 2: A typical SDSoC development process (Image source: Xilinx)

While it is clear that SDSoC significantly increases the development efficiency of embedded vision hardware platforms based on Zynq Ultrascale+ MPSoC, we’ve still got a long way to go because the market will always be coming up with new demands. For example, your product may become obsolete tomorrow if you don’t consider adding a machine learning feature to your embedded vision system today.

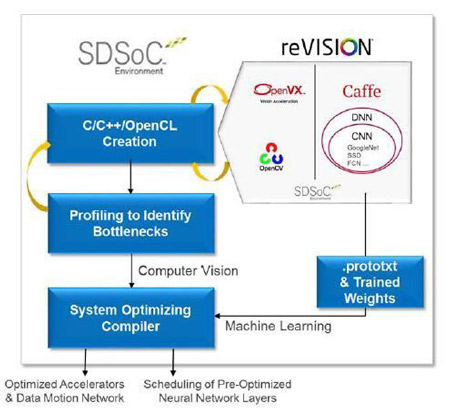

To pursue innovation in design, the traditional approach to vision processing will have to change. Instead of spending time and energy on optimizing HDL code themselves, developers have to take advantage of existing, certified, and well developed IP resources and use software-defined methods to achieve vision acceleration. The Xilinx reVISION stack is one such system, combining all the elements necessary for implementing the new approach.

The reVISION stack contains abundant platform, algorithm, and application development resources, and supports some of the most popular neural networks, including AlexNet, GoogLeNet, SqueezeNet, SSD, and FCN. The stack furthermore provides library elements, including predefined and optimized implementations of Convolutional Neural Network (CNN) components, which are needed for building custom neural networks (deep neural networks (DNN) and CNNs).

The machine learning elements are complemented by an extensive set of acceleration-ready OpenCV functions to satisfy computer vision processing requirements. To facilitate application development, Xilinx supports industry frameworks such as the machine learning-oriented Caffe and the computer vision-oriented OpenVX. The reVISION stack also includes development platforms and various sensors provided by Xilinx and its business partners.

The development process in reVISION is straightforward. In the SDSoC development environment, software engineers and system designers can use reVISION hardware as a target and call a large number of acceleration-ready computer vision library functions to build and deploy applications quickly.
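As a rough illustration of what calling a library function with compile-time image dimensions can look like, consider the sketch below. Everything in it is hypothetical: the `Image` type and the `box_blur3x3` function are stand-ins written for this example and are not part of reVISION, OpenCV, or any Xilinx library. The only assumption carried over from hardware-oriented design is that image sizes are fixed at compile time so loop bounds are known in advance.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical image type with compile-time dimensions (not a real library type).
template <std::size_t ROWS, std::size_t COLS>
struct Image {
    std::array<std::uint8_t, ROWS * COLS> data{};  // zero-initialized pixels
    std::uint8_t& at(std::size_t r, std::size_t c) { return data[r * COLS + c]; }
    std::uint8_t at(std::size_t r, std::size_t c) const { return data[r * COLS + c]; }
};

// Hypothetical library-style function: 3x3 box blur.
// Border pixels are copied through unchanged to keep the sketch short.
template <std::size_t ROWS, std::size_t COLS>
void box_blur3x3(const Image<ROWS, COLS>& src, Image<ROWS, COLS>& dst) {
    static_assert(ROWS >= 3 && COLS >= 3, "image must be at least 3x3");
    for (std::size_t r = 0; r < ROWS; ++r) {
        for (std::size_t c = 0; c < COLS; ++c) {
            if (r == 0 || c == 0 || r == ROWS - 1 || c == COLS - 1) {
                dst.at(r, c) = src.at(r, c);
                continue;
            }
            // Sum the 3x3 neighborhood centered on (r, c).
            unsigned sum = 0;
            for (std::size_t dr = 0; dr < 3; ++dr) {
                for (std::size_t dc = 0; dc < 3; ++dc) {
                    sum += src.at(r + dr - 1, c + dc - 1);
                }
            }
            dst.at(r, c) = static_cast<std::uint8_t>(sum / 9);
        }
    }
}
```

From the application's point of view the call is a single line, `box_blur3x3(src, dst);`, which is the appeal of a library-based flow: the caller does not need to know whether the function body ends up running in software or in programmable logic.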

According to Xilinx, when users develop vision processing with a traditional RTL flow, the Xilinx FPGA tools help them complete only 20% of the work, and they have to deal with the remaining 80% themselves. With reVISION-based development the split is reversed: 80% of the work is done for them, and users only have to deal with the remaining 20%. The latter process is vastly more efficient.

Fig. 3: The reVISION software defined design process (Image source: Xilinx)

In conclusion, the Zynq Ultrascale+ MPSoC hardware architecture optimized specifically for video processing, an easy-to-use SDSoC development environment that doesn’t require extensive experience, and the reVISION stack with its abundant resources form the three-piece kit for embedded vision development, reducing the workload for developers and improving design efficiency. Aren't we, as embedded vision developers, lucky to have access to these tools?

Fig. 4: The Avnet MicroZed embedded vision development kit, together with reVISION, gives developers complete embedded vision design and development support
