As embedded vision becomes prevalent, which processors are the most viable?

A list of the fastest-growing advanced technologies in the last decade will inevitably include embedded vision. Many instances of embedded vision applications in everyday life can be readily found even without the support of market research. For example:

• We can withdraw cash from an ATM using facial recognition, which is becoming the mainstream identification method.

• We are installing a growing number of cameras in our cars to give drivers a better sense of their surroundings and, ultimately, to achieve autonomous driving.

• Homes are now typically fitted with security systems. Developers are also considering adding vision recognition to smart refrigerators and other household appliances.

• Our mobile phones are equipped with cameras that, in addition to photography, can be used in a number of innovative ways, such as AR gaming.

• As soon as you walk into an unmanned convenience store, hundreds of cameras start recording and analyzing your every move. They may even have a better idea of what you need than you do.

Behind every camera is an embedded vision system that is constantly observing and analyzing the world around us.

Embedded vision, as the name implies, means implementing computer vision and its applications in an embedded system. The constantly expanding reach of embedded vision applications in the last decade is primarily due to progress in processor technologies, which has given us enough hardware computing power to run complex vision algorithms in embedded scenarios.

In practice, however, many challenges remain in the design and development of embedded vision systems, including power, size, and cost. One of the greatest challenges lies in the fragmentation of embedded applications. Client specifications for embedded vision can vary substantially, and, without a set of universal standards, the corresponding vision processing algorithms often change over time. Developers, meanwhile, usually prefer to optimize their own algorithms to create a competitive advantage through differentiation.

This uncertainty can be an opportunity in disguise for embedded processor manufacturers as the field is wide open until one player succeeds in dominating the market. This is also the reason behind the immense number of different architectures for processors used in embedded vision. For developers, this means that careful consideration is necessary in choosing an embedded vision processor that presents itself as the best and most suitable solution.

Fig. 1: The Avnet Nuvoton-based N32926 online camera solution combines small size with low cost, an excellent match for China's surveillance market, where orders tend to be large.

ASIC/ASSP chips

ASIC and ASSP chips implement software algorithms directly in hardware circuits and show a clear advantage in performance. If manufactured in sufficient volumes, they also offer a price-performance ratio that is hard to beat. Their disadvantage is equally clear: a lack of flexibility. Adapting them to fast-changing embedded vision applications is slow and costly, in terms of both development life cycle and R&D expense. Hence, except for well-developed applications produced in large quantities, embedded vision developers tend to err on the side of caution when deciding whether to use an ASIC or ASSP.

General-purpose processors (GPPs)

GPPs are an alternative architecture for embedded vision. Unlike ASIC/ASSP chips, they run different algorithms in software on a standard architecture, which makes them highly flexible and, given their simple system architecture, easy to develop for. The support offered by a more mature ecosystem also makes it easier to port algorithms to GPPs. Vision algorithms, however, often generate a huge amount of data, and the low memory bandwidth of a GPP can become a performance bottleneck that leaves it unable to handle data-intensive processing. While GPPs continue to improve in terms of technical specifications, their architecture is not necessarily suitable for embedded vision applications with high performance demands.
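To give a rough sense of the data volumes involved, the short sketch below estimates the raw data rate of an uncompressed video stream. The resolution, pixel depth, and frame rate are illustrative assumptions rather than figures from any particular product, and the function name is hypothetical.

```python
def raw_stream_bandwidth(width, height, bytes_per_pixel, fps):
    """Return the raw data rate of an uncompressed video stream in bytes per second."""
    return width * height * bytes_per_pixel * fps


# Assumed example: 1080p video, 3 bytes per pixel (24-bit RGB), 30 frames per second.
rate = raw_stream_bandwidth(1920, 1080, 3, 30)
print(f"{rate / 1e6:.1f} MB/s")  # prints "186.6 MB/s"
```

A single stream under these assumptions already moves about 186.6 MB every second, and a vision algorithm typically reads and writes each frame several times, so the actual memory traffic can be a multiple of that figure.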

GPUs and DSPs

GPUs and DSPs are used by developers in place of GPPs for specific vision processing purposes. For example, the excellent parallel computing capability of GPUs makes them a natural choice for 3D graphics processing. As both GPUs and DSPs can be programmed to run different algorithms, both offer flexibility. Nevertheless, both are also more specialized devices. It is therefore difficult for either to form a complete vision processor on its own despite their respective advantages, meaning they must be integrated with general-purpose CPUs, auxiliary processors, and other circuits to form complex heterogeneous processing units. Such complexity naturally increases development difficulty.

A classic example of a heterogeneous processing unit is the mobile application processor (AP), which is usually built with the necessary hardware resources for vision processing. Its form factor and energy efficiency are typically heavily optimized to meet the requirements of battery-powered portable devices. Stronger software development support has also enabled the design of embedded vision solutions and products using mobile APs. However, because some of the special-purpose auxiliary processors integrated into APs are not programmable, the use of APs in embedded vision applications will remain limited.

For developers who wish to strike a balance between the performance and flexibility of embedded vision systems, there is indeed one unique vision processing architecture that may be worth a closer look: the FPGA SoC. One example is the Xilinx Zynq platform.

This is a heterogeneous processing unit that contains an embedded CPU (the processing system, PS) and programmable logic (PL). For developers, its significance is that different tasks can be assigned to the PS and the PL depending on actual vision processing requirements. For example, graphics-intensive tasks with higher performance demands can be assigned to the PL, while those that don't involve key or system processes can be handled by the PS. The partitioning can then be tuned to strike a good balance between performance and energy efficiency. FPGA companies are also continuing to introduce compatible development tools, software, and algorithm libraries to help developers adopt this new processing architecture.
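The idea of splitting work between the PS and the PL can be illustrated with a minimal sketch. It is not based on any vendor tool: the `Task` class, the `assign` function, the throughput estimates, and the threshold value are all hypothetical, and a real partitioning decision would also weigh latency, logic resources, and power.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    pixels_per_second: float  # estimated throughput demand (assumed values)


# Hypothetical cut-off above which a task is considered too heavy for the CPU.
PL_THRESHOLD = 50e6


def assign(tasks, threshold=PL_THRESHOLD):
    """Map each task name to 'PL' (programmable logic) or 'PS' (embedded CPU)."""
    return {
        t.name: ("PL" if t.pixels_per_second >= threshold else "PS")
        for t in tasks
    }


tasks = [
    Task("edge_filter", 1920 * 1080 * 30),  # per-pixel work on every 1080p frame
    Task("tracking_logic", 1e4),            # light, decision-oriented work
    Task("network_stack", 0),               # no pixel processing at all
]
plan = assign(tasks)
print(plan)
```

Under these assumed numbers, only the per-pixel filter crosses the threshold and lands on the PL, while the lighter control-oriented tasks stay on the PS.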

In conclusion, embedded vision has been an area worth watching and investing in over the last ten years, and it will remain so in the next decade. Profit will not be far behind once an application scenario is developed to the full and the right embedded data processing solutions are identified.

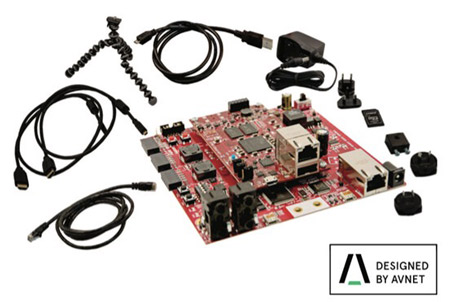

Fig. 2: The Avnet Xilinx FPGA SoC based MicroZed embedded vision development kit
